Product design

MLOps product redesign

Redesigning Fosfor’s MLOps platform to achieve ~30% operational efficiency gains

Product details

🎯 Summary

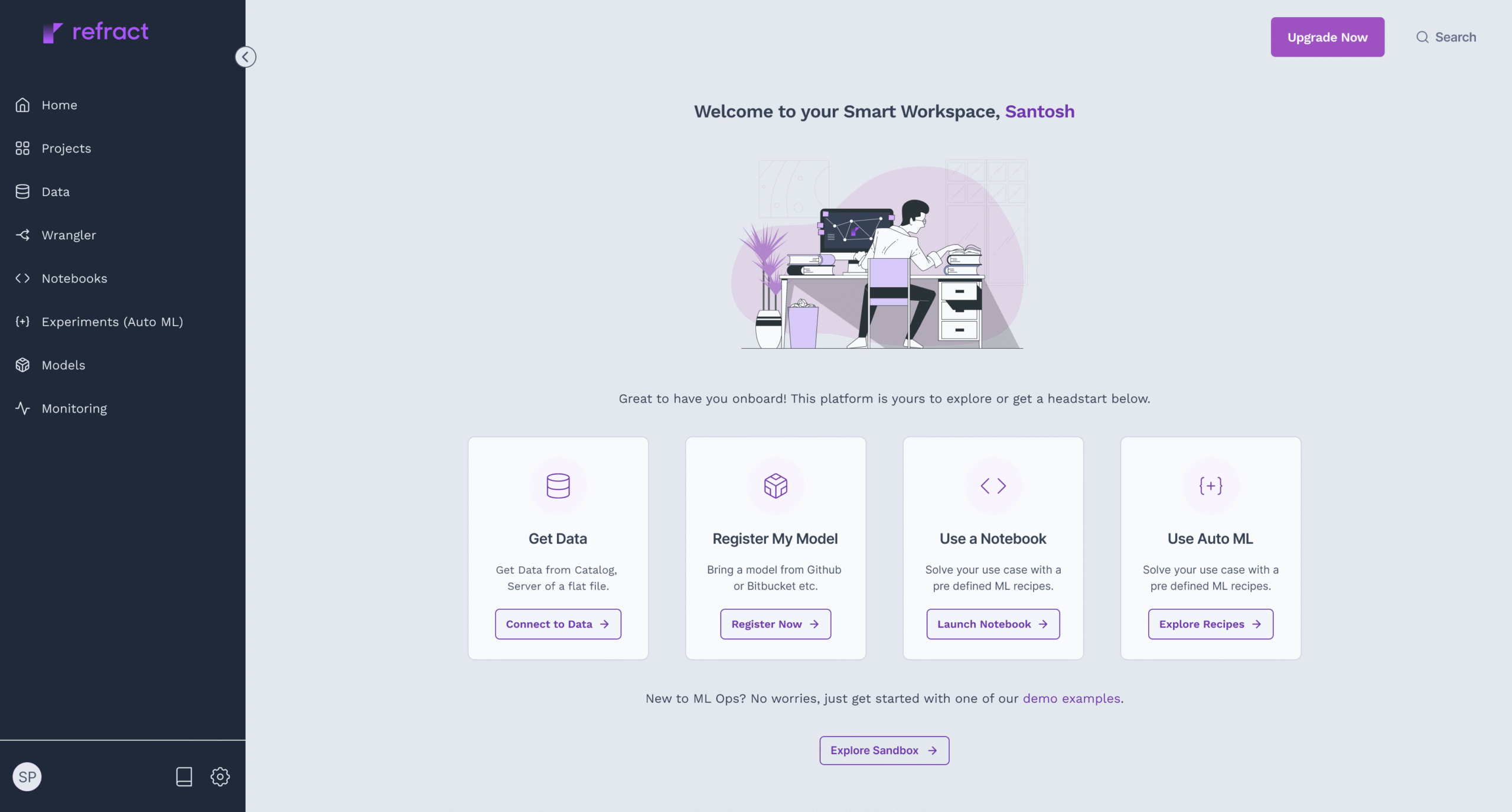

Refract, one of Fosfor’s core MLOps platforms, underwent a strategic redesign to streamline ML model deployment, reduce operational overhead, and improve data wrangling workflows. Benchmarking against industry leaders such as Spell.ml (since acquired by Reddit), Dataiku, Tableau, and Trifacta (since acquired by Alteryx) informed systemic improvements that reduced model deployment time, enhanced usability, and boosted the platform's overall Operational Efficiency Percentage (OEP) by ~30%.

💊 Problem statement

Despite offering robust infrastructure, Refract faced increasing friction from data scientists and ML engineers due to:

- Fragmented data wrangling workflows, requiring extensive manual intervention

- Deployment pipelines that lacked flexibility and transparency

- Inefficient resource orchestration, causing delays and over-utilization

- Difficulty in version control and reproducibility, hampering experimentation

- A non-intuitive UI that constrained experimentation speed and productivity

These issues translated into longer model deployment cycles, operational silos, and lower team productivity than on competing platforms.

🎻 Role and team

Led the Design function:

- Defined the redesign roadmap in collaboration with Product and Engineering

- Conducted competitive analysis of Spell.ml, Dataiku, Tableau and Trifacta to extract key differentiators

- Led the UX and IA transformation of workflows including data wrangling, model experimentation, and deployment

- Monitored the Operational Efficiency Percentage (OEP) metric during the alpha release to ensure measurable ROI from design interventions

Team Composition:

- 2 Product designers

- 3 Frontend engineers

- 1 Data engineer

- 1 Product Manager

- 1 DevOps specialist

- 2 Backend engineers

🧠 Approach

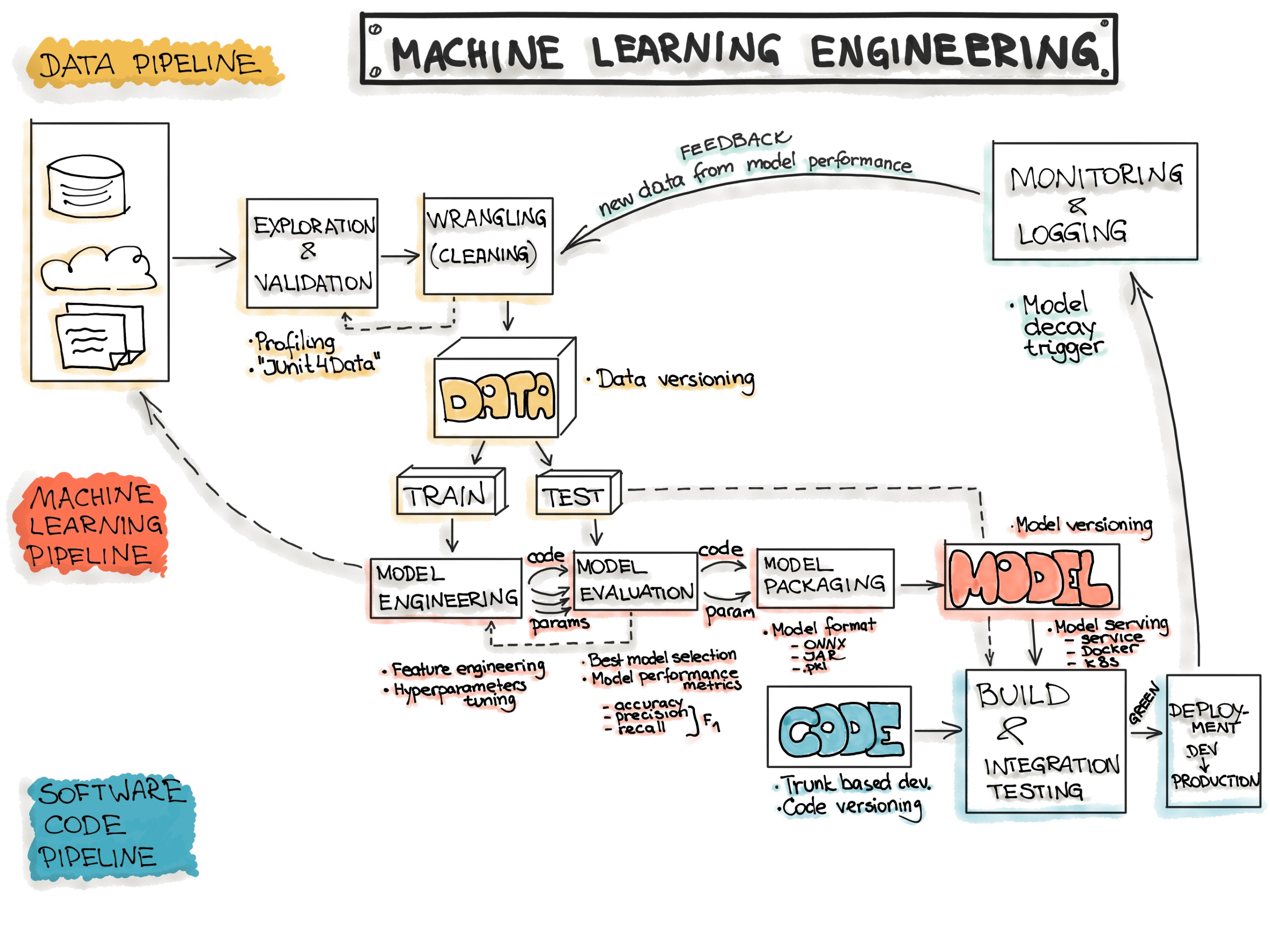

We followed a three-phase transformation strategy:

Phase 1: Platform Audit & Benchmarking

- Mapped existing Refract workflows

- Identified latency hotspots in the model deployment lifecycle

- Compared orchestration flows, notebook integration, and metadata tracking against Spell.ml and Dataiku

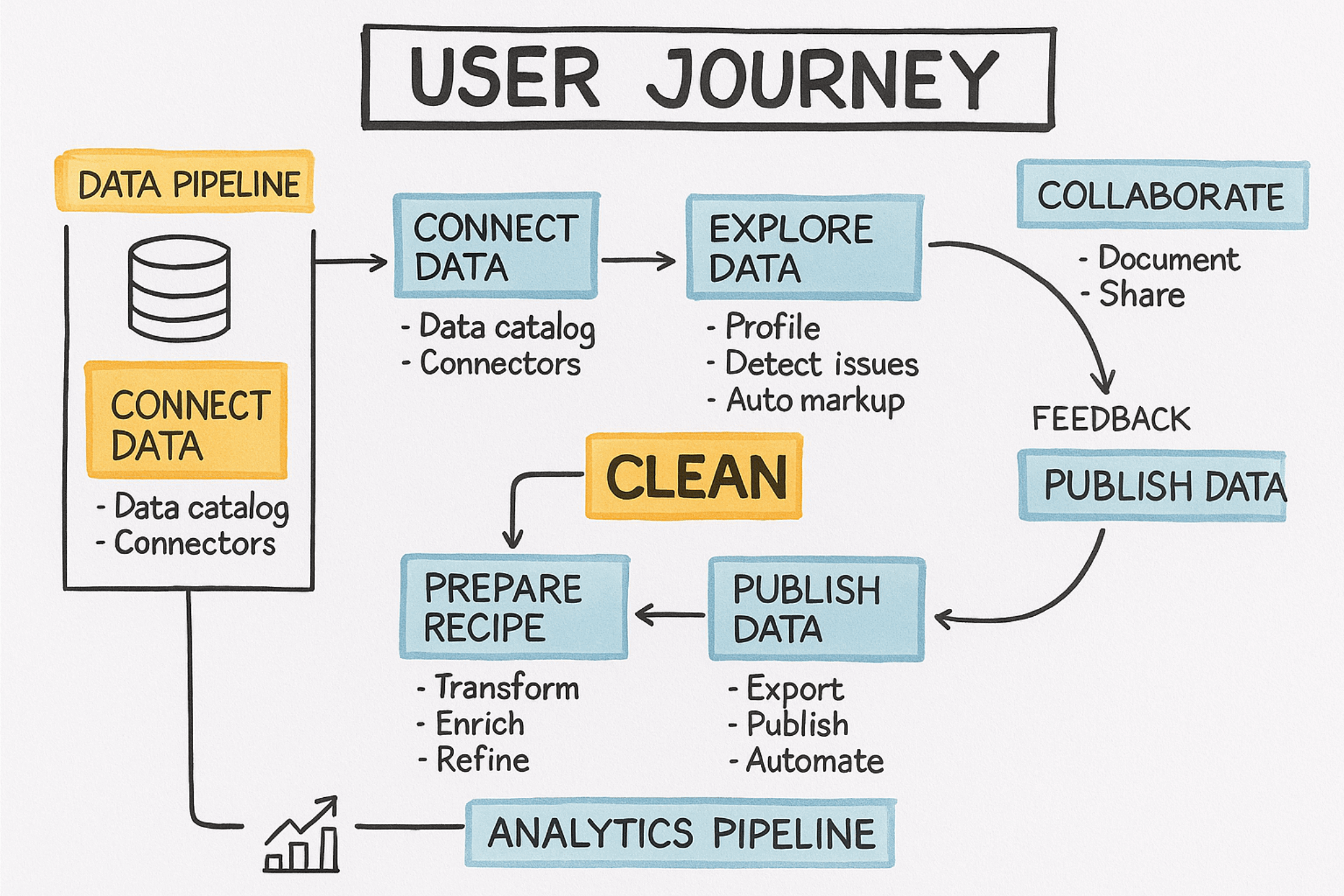

Phase 2: Data Wrangling Re-architecture

- Unified data prep flows into a drag-and-drop pipeline interface

- Enabled inline data validation, schema suggestions, and automatic type inference

- Introduced modular wrangling components for reusability and pipeline cloning
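As a rough illustration of what automatic type inference and inline validation can look like, here is a minimal sketch. It is not Refract's implementation: the function names, the supported types, and the ISO date format are all assumptions made for the example.

```python
from datetime import datetime

# Hypothetical parsers, ordered from narrowest to widest type.
# The date format (YYYY-MM-DD) is an assumption for this sketch.
PARSERS = {
    "integer": int,
    "float": float,
    "date": lambda s: datetime.strptime(s, "%Y-%m-%d"),
}


def _parses(value, type_name):
    """Return True if value can be parsed as type_name."""
    try:
        PARSERS[type_name](value)
        return True
    except ValueError:
        return False


def infer_type(values):
    """Suggest the narrowest type that fits every non-empty value."""
    present = [v.strip() for v in values if v and v.strip()]
    if not present:
        return "unknown"
    for type_name in PARSERS:
        if all(_parses(v, type_name) for v in present):
            return type_name
    return "string"


def invalid_rows(values, type_name):
    """Return indexes of rows that are empty or do not match type_name."""
    bad = []
    for i, v in enumerate(values):
        if not v or not v.strip():
            bad.append(i)
        elif type_name in PARSERS and not _parses(v.strip(), type_name):
            bad.append(i)
    return bad


# Example: suggest a schema for two columns, then flag rows for inline review.
columns = {
    "age": ["34", "41", "", "29"],
    "signup_date": ["2024-01-05", "2024-02-10", "n/a", "2024-03-01"],
}
suggested_schema = {name: infer_type(vals) for name, vals in columns.items()}
flagged = invalid_rows(columns["signup_date"], "date")
```

In a visual pipeline, the suggested schema would typically be shown to the user for confirmation, and the flagged rows highlighted in the inline preview rather than silently dropped.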

Phase 3: Deployment Acceleration

- Integrated one-click deployment workflows with rollback and auto-logging

- Replaced linear deployment flows with parallelized DAG-based orchestration

- Simplified environment control and experiment tracking for reproducibility
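To make the difference between a linear flow and DAG-based orchestration concrete, the sketch below runs deployment steps in dependency order and executes independent steps in parallel. It uses only the Python standard library; the step names and the `run_dag` helper are hypothetical and are not taken from Refract.

```python
from concurrent.futures import FIRST_COMPLETED, ThreadPoolExecutor, wait
from graphlib import TopologicalSorter  # Python 3.9+


def run_dag(dependencies, task_fn, max_workers=4):
    """Run tasks in dependency order, with independent tasks in parallel.

    dependencies maps each task name to the set of tasks it depends on.
    Returns the task names in the order they finished.
    """
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    finished = []
    running = {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        while sorter.is_active():
            # Submit every task whose dependencies are all complete.
            for task in sorter.get_ready():
                running[pool.submit(task_fn, task)] = task
            done, _ = wait(list(running), return_when=FIRST_COMPLETED)
            for future in done:
                future.result()  # re-raise any exception from the task
                task = running.pop(future)
                finished.append(task)
                sorter.done(task)  # unblocks downstream tasks
    return finished


# Hypothetical deployment pipeline: image build and model validation
# do not depend on each other, so they can run at the same time.
pipeline = {
    "build_image": set(),
    "validate_model": set(),
    "push_image": {"build_image"},
    "deploy": {"push_image", "validate_model"},
}

if __name__ == "__main__":
    order = run_dag(pipeline, lambda step: print(f"running {step}"))
    print(order)
```

In a linear flow these four steps would run one after another; with a DAG, total time is bounded by the longest dependency chain rather than the sum of all steps.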

😓 Challenges

- Data preparation relied on custom scripts written in Python/R, which meant analysts and developers spent a disproportionate share of their time (often 60-80% of a project's duration) cleaning and preparing data rather than on analysis or model building.

- As data volumes grew, manual methods and even custom scripting became incredibly cumbersome and inefficient.

- Performing effective data wrangling required strong programming skills (for scripting) or deep knowledge of SQL for database operations.

- Identifying and rectifying errors, inconsistencies, missing values, and duplicates was a painstaking manual task

- Integrating data from various disparate sources (e.g., combining data from a relational database, a CSV file, and a web API) was a complex task that often required custom coding for each unique integration

- Aligning with cross-functional stakeholders from MLOps, DevOps, and Data Engineering

- Quantifying “operational efficiency” in a way that reflects both deployment speed and workflow usability

👌🏼 Solution

The redesign focused on deep structural changes and interface simplification to remove friction across the ML lifecycle:

✅ Key Improvements:

- Smart Data Wrangling Engine: Auto-tag data types, suggest column operations, and allow inline previews

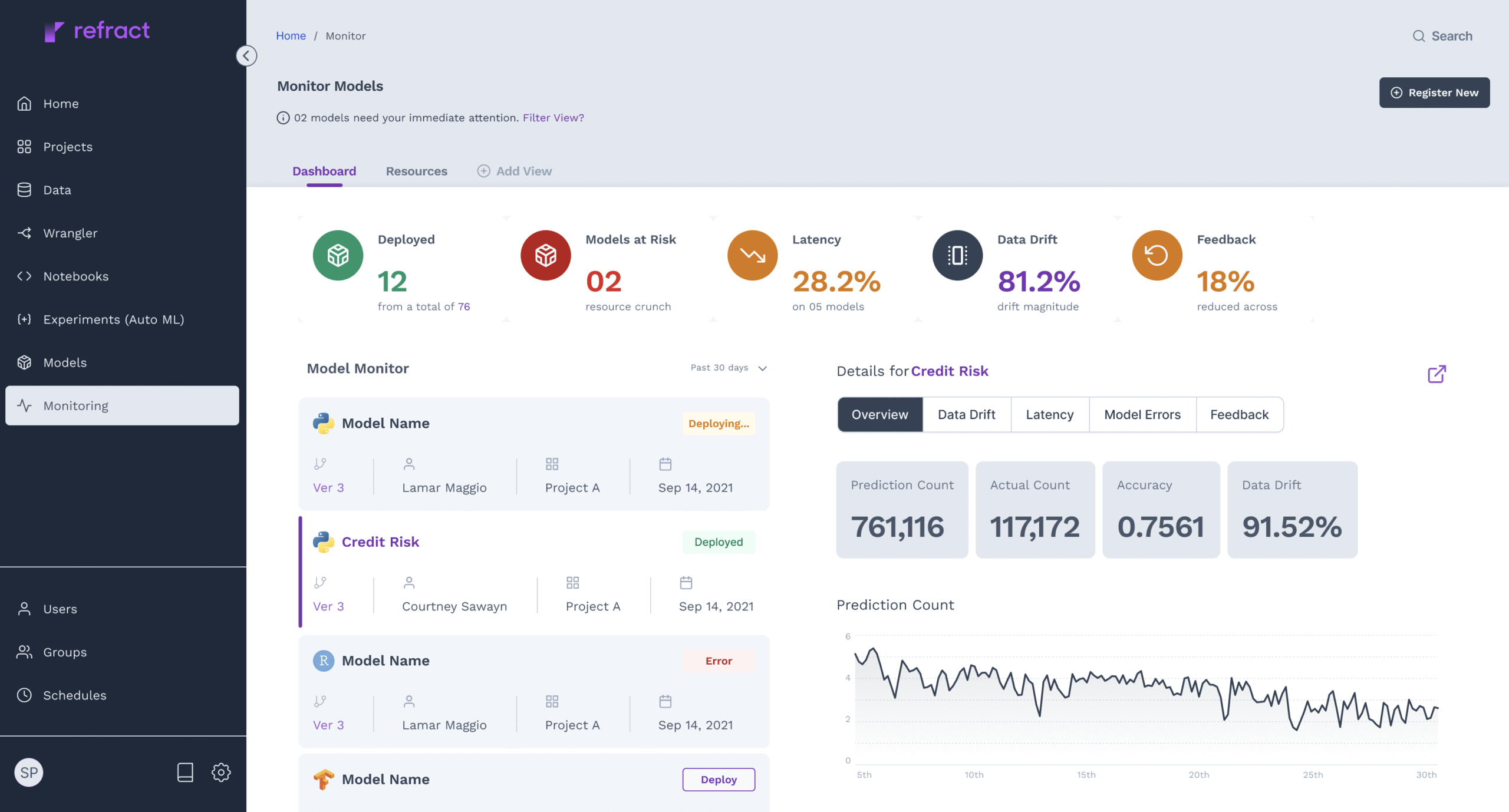

- Optimized Deployment Flow: Deployment time reduced by ~30% (estimated), with templates for frequently used deployment environments (dev, staging, prod)

- Intelligent Experiment Tracker: Visual version diff, automated logging, metadata-rich comparisons

- Modular ML Ops Console: Role-based access control, CI/CD hooks, model drift alerts

- Interactive Dashboards for monitoring pipeline health and runtime anomalies

- Unified Pipeline Designer: Visually connect data ingestion, transformation, and model training nodes

Results & Impact

(Estimated)

- ~30% faster model deployment

- ~50% improvement in accuracy with the improved data wrangling experience

- An extensive library of connectors for 50+ data sources, including traditional databases, cloud data warehouses, streaming data, APIs, and even unstructured data

- Intuitive visual interfaces with drag-and-drop functionality enabled non-data users to perform complex wrangling without writing code, while still offering scripting capabilities for advanced users

- The ability to process and transform data in real time as it arrives was crucial in building trust early in the cycle, even before the model was tested

The redesign reduced onboarding friction and fundamentally changed how data wrangling was perceived within the larger MLOps workflow, so that it was no longer seen as a blocker. It also increased cross-team adoption of deployment templates and enhanced auditability and experiment traceability at a granular level.


Other products

Biofuels compliance

Role

Experience design lead

Contribution

Designed a universal, scalable ingestion interface capable of adapting to evolving document formats.

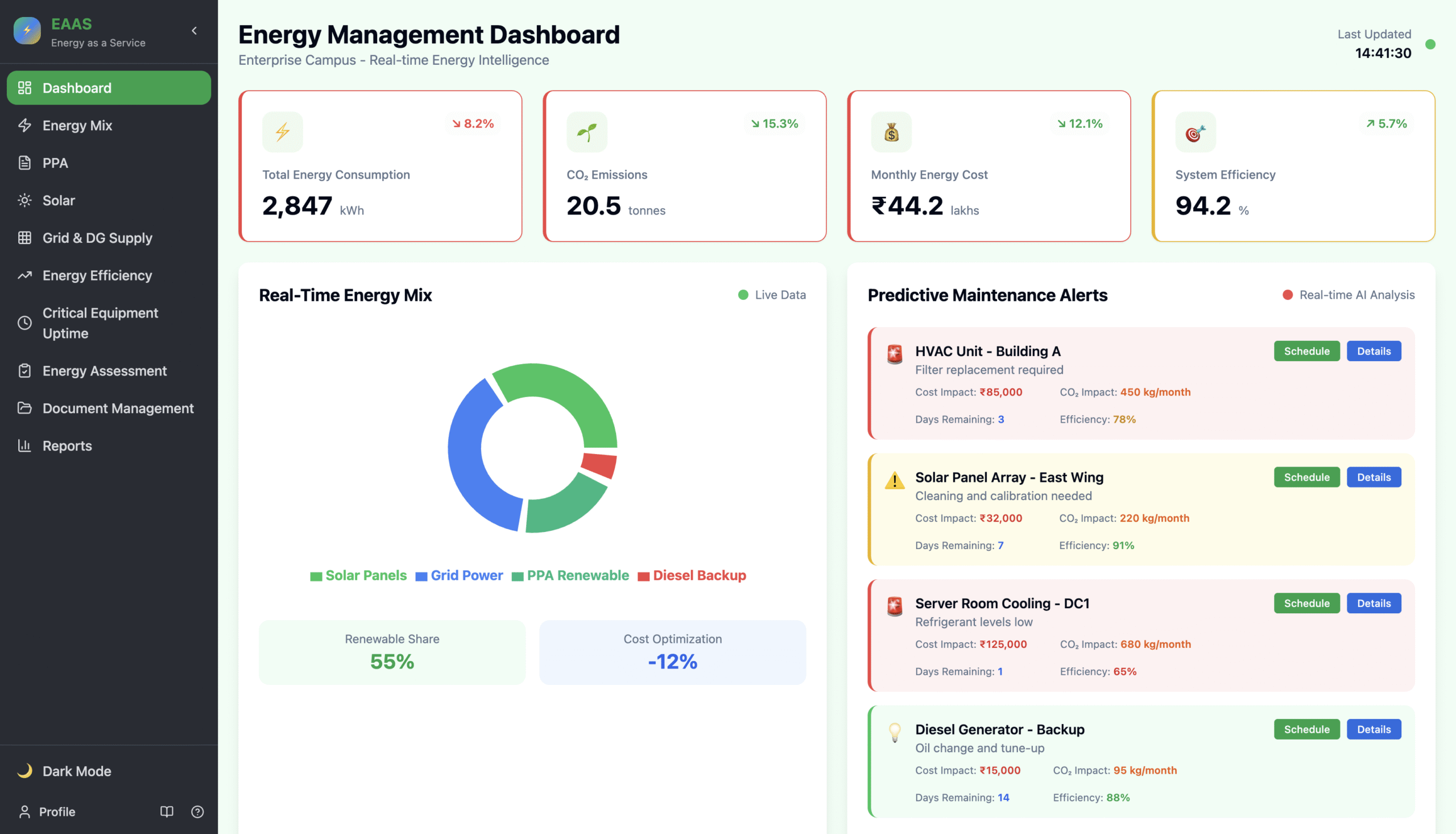

Energy management

Role

Experience design lead

Contribution

Led the design team from the partner company and conceptualised the entire design of this modern energy management platform.